Data centre cooling has evolved from serving small clusters of servers to serving giant server farms. While these large modern data centres are vital components of the information services economy, they consume a formidable amount of energy worldwide.

It’s been widely documented that the cooling systems, namely the chiller, humidifier and computer room air conditioning (Crac) units, account for 45% of the total energy consumption of a data centre, while the IT equipment accounts for 30%. On these figures, every 1kWh consumed by the IT equipment is accompanied by around 1.5kWh for cooling alone, and by roughly 2.3kWh once the remaining auxiliary systems are included.
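The quoted shares can be converted into energy per kWh of IT load by dividing each share by the IT share. The short Python sketch below assumes only the 45% (cooling) and 30% (IT) figures given above; the function name is our own.

```python
# Shares of total data centre energy quoted in the text.
COOLING_SHARE = 0.45  # chiller, humidifier and Crac units
IT_SHARE = 0.30       # IT equipment


def overhead_per_it_kwh(cooling_share: float, it_share: float) -> dict:
    """Return the energy consumed alongside each 1 kWh of IT load."""
    other_share = 1.0 - cooling_share - it_share  # remaining auxiliaries
    return {
        "cooling_kwh": cooling_share / it_share,
        "other_kwh": other_share / it_share,
        "total_non_it_kwh": (1.0 - it_share) / it_share,
    }


result = overhead_per_it_kwh(COOLING_SHARE, IT_SHARE)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

With these shares the cooling systems alone use about 1.5kWh per kWh of IT load, and all non-IT systems together about 2.3kWh.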

From environmental and cost-efficiency perspectives, selecting a cooling method that can reduce this energy demand is clearly beneficial.

Traditionally, data centres have been air cooled with chillers, humidifiers and Crac units, in a variety of ‘cold aisle/hot aisle’ approaches that aren’t terribly efficient and that can result in hot spots within the data hall.

Over the years, server equipment has become more resilient and can now tolerate a wider range of temperature and humidity levels than older technology allowed. These days, legitimate alternatives to traditional cooling are routinely considered in most data centre projects seeking greener and more efficient strategies.

Currently, in the northern hemisphere, air-cooling solutions are turning increasingly to free cooling, which uses outside air combined with reclaimed heat (in winter) and evaporative cooling (in summer) to provide the total cooling solution throughout the year.
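The seasonal logic of free cooling can be pictured as a simple mode selector driven by outdoor temperature. This is a minimal sketch, not any manufacturer's control strategy: the two temperature thresholds are hypothetical placeholders, and real systems also account for humidity and partial mixing.

```python
from enum import Enum


class Mode(Enum):
    MIX_WITH_RECLAIMED_HEAT = "outside air tempered with reclaimed heat"
    OUTSIDE_AIR_ONLY = "outside air only"
    EVAPORATIVE_ASSIST = "outside air with evaporative cooling"


# Hypothetical thresholds (deg C); real values come from the design brief.
SUPPLY_SETPOINT_C = 24.0
MIN_DIRECT_AIR_C = 18.0


def select_mode(outdoor_c: float) -> Mode:
    """Pick a free-cooling mode from the outdoor dry-bulb temperature."""
    if outdoor_c < MIN_DIRECT_AIR_C:
        # Too cold to supply directly (winter): blend in reclaimed heat.
        return Mode.MIX_WITH_RECLAIMED_HEAT
    if outdoor_c <= SUPPLY_SETPOINT_C:
        # Outside air is already within the supply range.
        return Mode.OUTSIDE_AIR_ONLY
    # Warmer than the setpoint (summer): add evaporative cooling.
    return Mode.EVAPORATIVE_ASSIST


for t in (2.0, 20.0, 30.0):
    print(t, select_mode(t).value)
```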

Water-based cooling options are a more modern approach: they cool the inside of the servers by pumping cold water through pipes or plates. Water-cooled rack systems work well but carry an inherent risk of leaks. Understanding the cost drivers and benefits of each option is crucial to advising clients effectively.
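How much water such a system must pump follows from the standard sensible-heat relation Q = ṁ·cp·ΔT. The sketch below is illustrative only: the 30kW rack load and 10K water temperature rise are hypothetical values, and the specific heat of water is taken as approximately 4.186kJ/kg·K.

```python
WATER_CP_KJ_PER_KG_K = 4.186  # approximate specific heat of water


def water_flow_kg_per_s(heat_load_kw: float, delta_t_k: float) -> float:
    """Mass flow needed to absorb heat_load_kw with a delta_t_k rise.

    Rearranges Q = m_dot * cp * delta_T to m_dot = Q / (cp * delta_T).
    """
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)


# Hypothetical example: a 30 kW rack with a 10 K water temperature rise.
flow = water_flow_kg_per_s(30.0, 10.0)
# Assuming a density of about 1 kg/L, kg/s is roughly equal to L/s.
print(f"{flow:.2f} kg/s (about {flow:.2f} L/s)")
```

Halving the permitted temperature rise doubles the required flow, which is one reason pipe and pump sizing feature among the cost drivers of water-based systems.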

There are many factors that drive the selection of any particular option, not least capital and life-cycle costs, but also the location of the data centre and the feasibility of incorporating innovations such as free cooling or aquifer thermal energy storage (ATES).

Parameters that drive these decisions include the power usage effectiveness (PUE) level needed to meet planning stipulations, and the optimisation of acoustics and total cost of ownership (TCO). PUE is the ratio of the total energy used by the facility to the energy used by the IT equipment, so it measures how efficiently the data centre uses its input power: the larger the number, the less efficient the solution. The selected method of power supply and the level of power resilience also affect the PUE calculation, so in practice it is the combined cooling and power solution that is assessed against these criteria when finalising the client’s preferred solution.
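A minimal PUE calculation is shown below. The meter readings are hypothetical figures for two candidate solutions, and the input checks are our own assumption about sensible guards rather than part of any standard.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical annual meter readings (total kWh, IT kWh).
options = {
    "option_a": (13_000_000, 10_000_000),
    "option_b": (15_000_000, 10_000_000),
}
for name, (total, it) in options.items():
    print(f"{name}: PUE = {pue(total, it):.2f}")
```

Both options serve the same IT load, but option_b draws more energy for cooling and power distribution, so its PUE is higher and it is the less efficient of the two.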

In this article, we look solely at three cooling solutions currently used on projects, to establish the cost drivers of each and the costs attributable to cooling alone. They are: air cooling by chiller and Crac units; air cooling by indirect air cooling (IAC) air handling plant; and chilled-water cooling derived from free-cooling, hybrid cooling towers with chiller assist.

Any power-supply solution, associated building works and main contractor prelims are excluded.

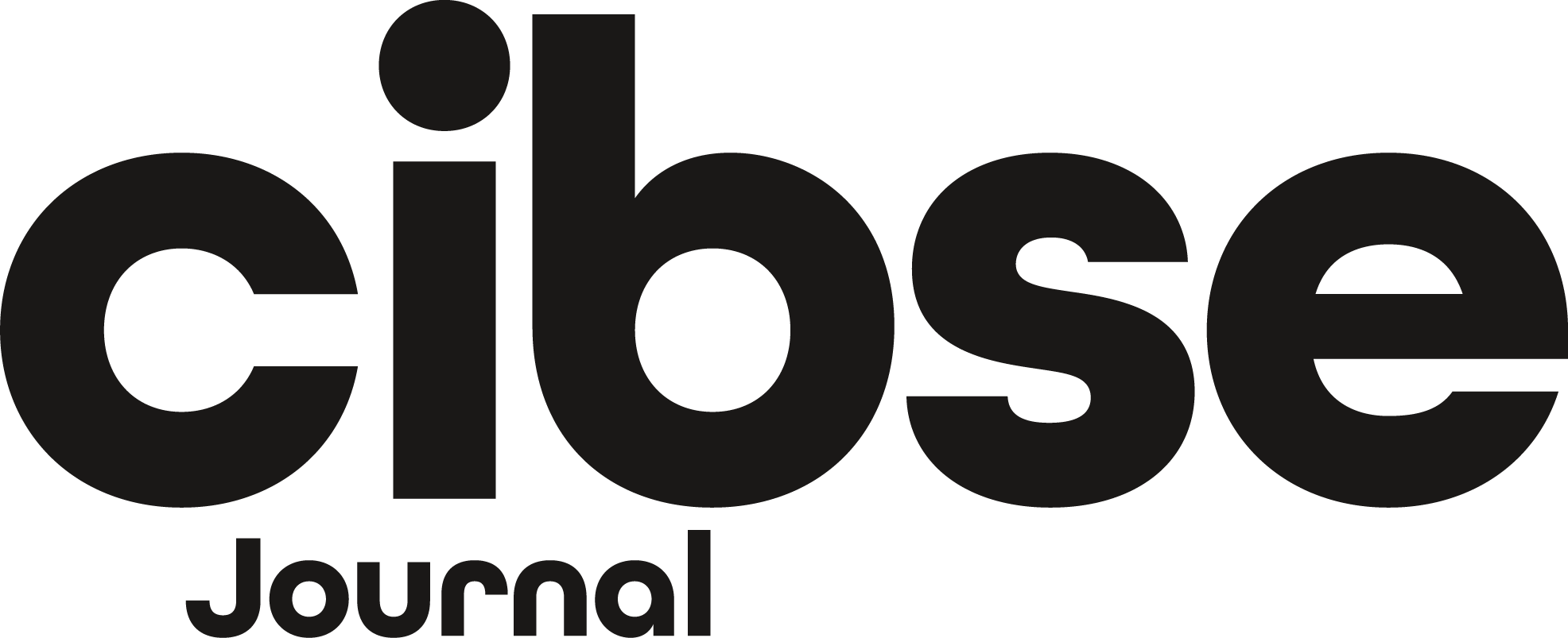

Air cooling by chiller and Crac units

This chilled-water solution serves downflow Crac units, which typically supply cold air to the data hall white space through a floor void. Crac units normally include humidification elements to control static electricity, and all hot air is returned to the Crac units, where the heat is removed and the cooled air is redistributed into the white space.

The chilled water is supplied by a traditional refrigeration chiller located externally, usually on the roof. There is no free cooling, and the chillers are sized for the full peak load.

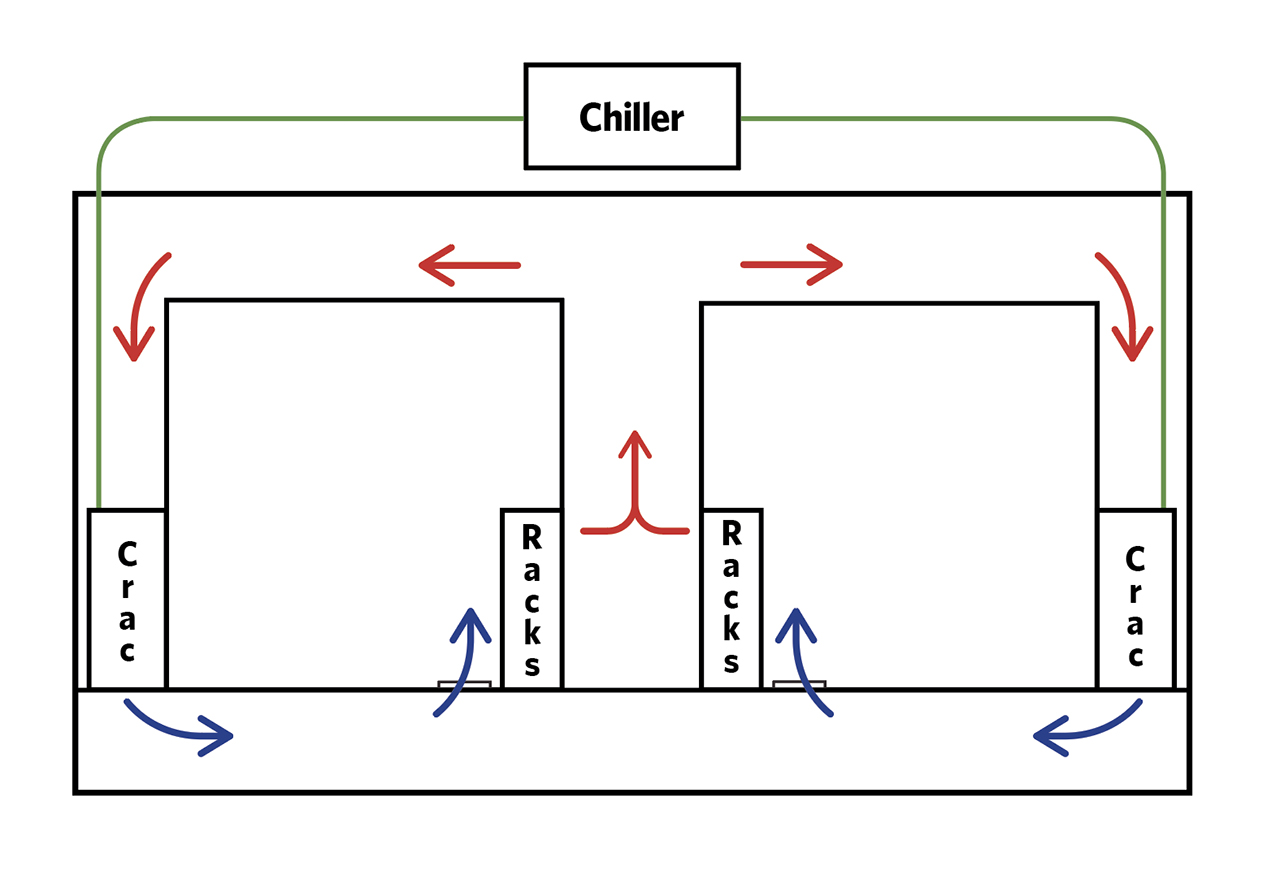

Air cooling by indirect air-cooling AHU

This ‘all air’-based cooling solution incorporates air handling plant mounted externally to the white space. Treated air is distributed to the white space via ductwork or through a plenum. Air is supplied at a relatively low velocity to the cold aisle, giving more control than traditional floor-void distribution.

The hot air is returned to the IAC unit via ductwork and is cooled by outdoor ambient air at a plate heat exchanger. To assist the cooling process during warm months, the ambient air is first cooled adiabatically (by water evaporation) before it cools the warm return air at the plate heat exchanger in the IAC unit. The water used for adiabatic cooling is bulk-stored as a safeguard against a mains supply outage, and this process water is distributed to the IAC units from a central pump plantroom.
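The benefit of adiabatic assist can be estimated with two standard effectiveness relations: evaporative pre-cooling moves the outdoor air towards its wet-bulb temperature, and the plate heat exchanger moves the return air towards the (pre-cooled) outdoor air temperature. This sketch assumes equal air capacity rates on both sides of the exchanger, and every temperature and effectiveness value is a hypothetical design point.

```python
def adiabatic_outlet_c(dry_bulb_c: float, wet_bulb_c: float, eff: float) -> float:
    """Outdoor air temperature after evaporative (adiabatic) pre-cooling."""
    return dry_bulb_c - eff * (dry_bulb_c - wet_bulb_c)


def hx_hot_outlet_c(hot_in_c: float, cold_in_c: float, eff: float) -> float:
    """Return air leaving the plate heat exchanger.

    Assumes equal capacity rates on both sides, so the effectiveness
    applies directly to the hot (return-air) stream.
    """
    return hot_in_c - eff * (hot_in_c - cold_in_c)


# Hypothetical summer design point (deg C) and effectiveness values.
outdoor_db, outdoor_wb = 30.0, 20.0
return_air = 36.0

dry = hx_hot_outlet_c(return_air, outdoor_db, 0.7)
precooled = adiabatic_outlet_c(outdoor_db, outdoor_wb, 0.8)
wet = hx_hot_outlet_c(return_air, precooled, 0.7)
print(f"without adiabatic assist: {dry:.1f} C")
print(f"with adiabatic assist:    {wet:.1f} C")
```

On these assumed values the supply air falls from about 31.8°C to about 26.2°C when the outdoor air is pre-cooled, without any refrigeration plant.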

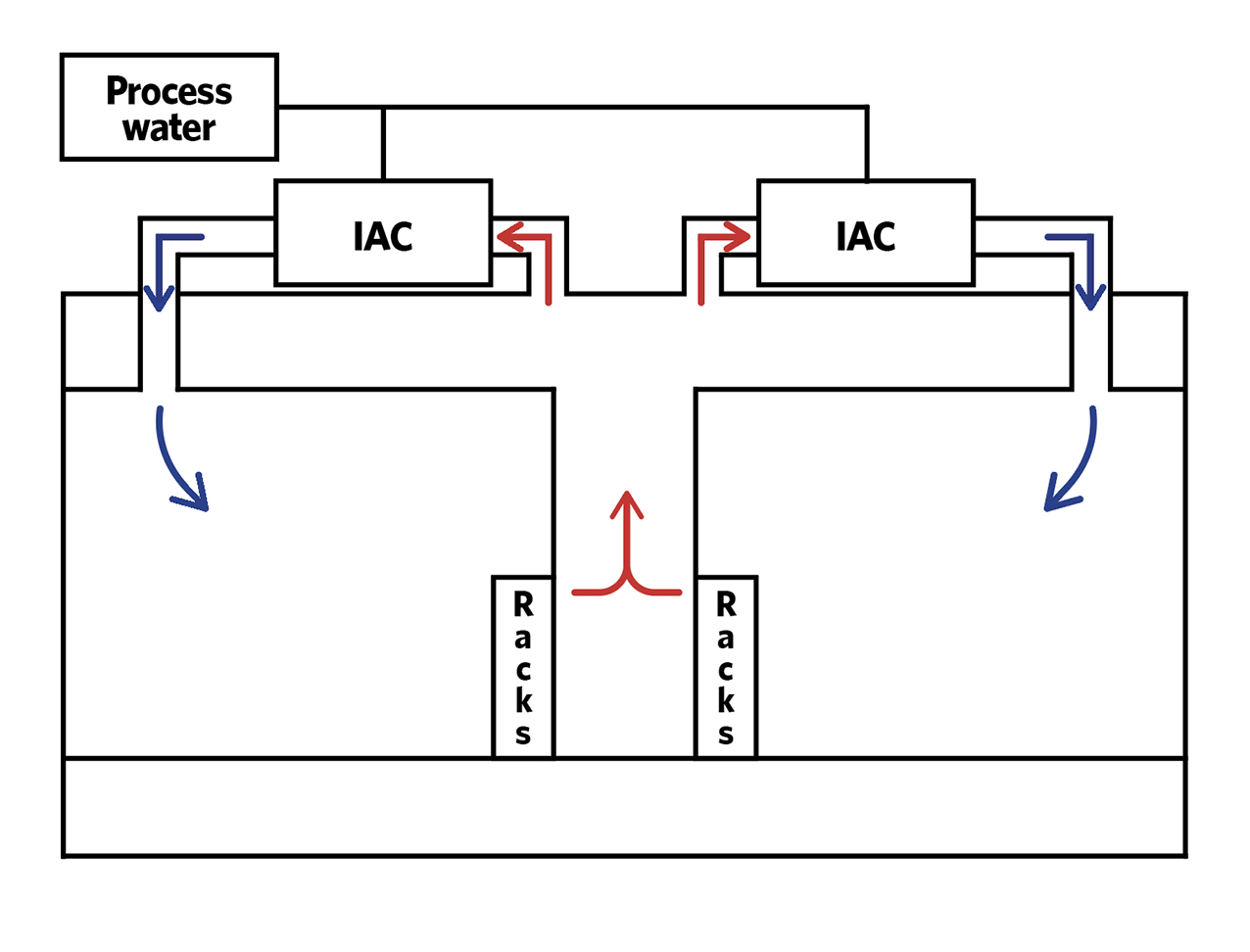

Chilled-water cooling derived from free-cooling, hybrid cooling towers with chiller assist

This chilled-water solution serves downflow Crac units, which typically supply cold air to the white space through a floor void. The cooling water is supplied by ‘free cooling’ cooling towers located externally, usually on the roof. Ambient air cools the warm water returning from the Crac units, with adiabatic cooling added during the warmer months.

At peak times, when the cooling towers approach their cooling-load limits, refrigeration chillers run in parallel with the towers.
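The chiller-assist arrangement can be modelled, in simplified form, as the towers taking as much load as they can and the chillers covering the remainder. This is a sketch under that assumption: real plant brings chillers on before the towers are fully loaded, and the load and capacity figures below are hypothetical.

```python
def split_load(load_kw: float, tower_capacity_kw: float) -> tuple[float, float]:
    """Share a cooling load between free-cooling towers and assist chillers.

    Simplification: towers are loaded first, up to their current capacity,
    and the chillers cover whatever load remains.
    """
    if load_kw < 0 or tower_capacity_kw < 0:
        raise ValueError("load and capacity must be non-negative")
    tower_kw = min(load_kw, tower_capacity_kw)
    chiller_kw = load_kw - tower_kw
    return tower_kw, chiller_kw


# Hypothetical cases: tower capacity falls as ambient conditions warm up.
for load, capacity in [(4000, 5000), (4500, 3500)]:
    towers, chillers = split_load(load, capacity)
    print(f"load {load} kW -> towers {towers} kW, chillers {chillers} kW")
```

Because the chillers only handle the peak shortfall, they run for far fewer hours than in a chiller-only system, although they must still be installed and maintained.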

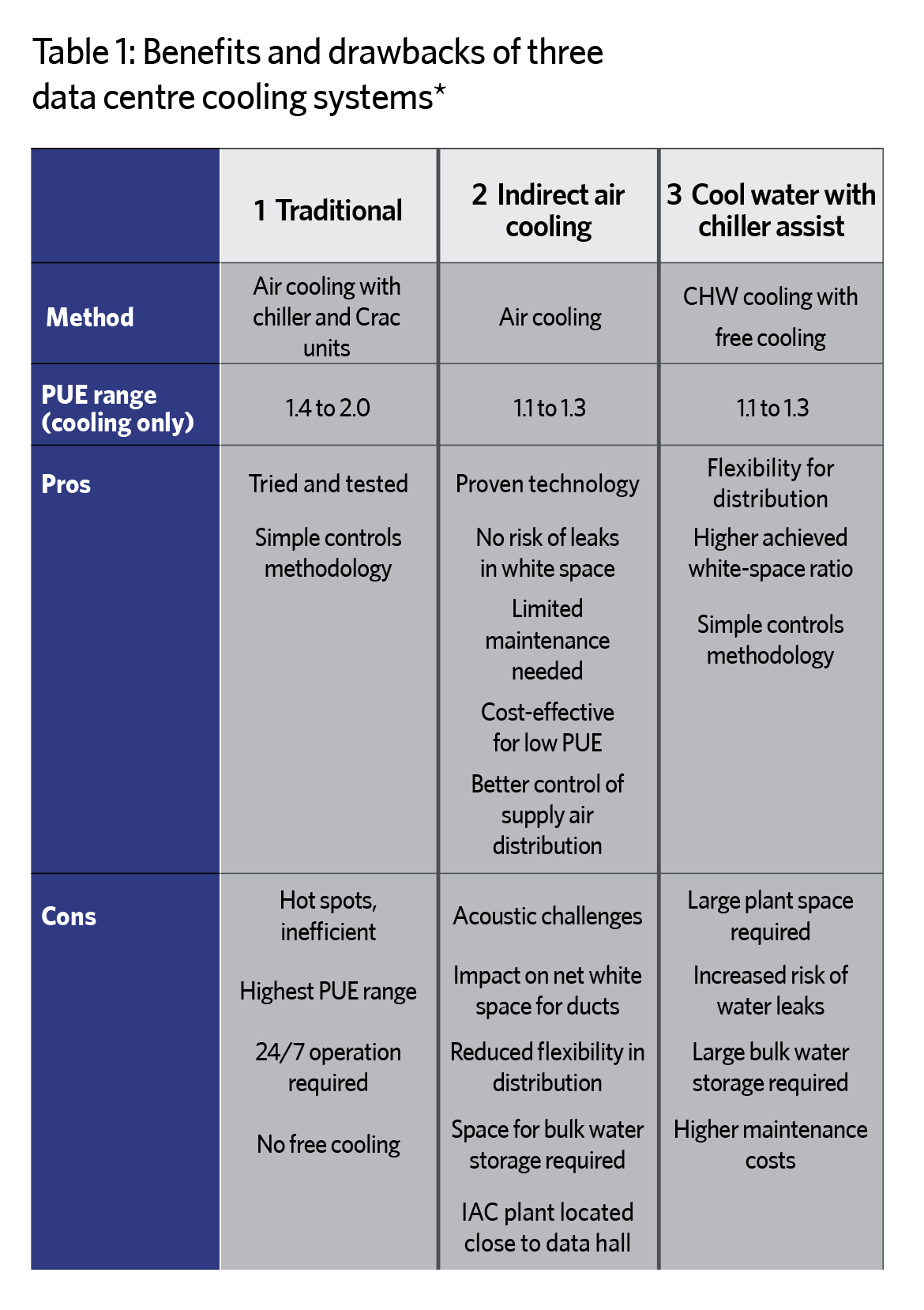

Table 1 summarises the pros and cons of each system. Bear in mind that numerous factors work in tandem within any given solution; for example, net-to-gross area, efficiency, power load, capital expenditure (capex) and total cost of ownership (TCO) combine to determine the best solution for the client. Always set defined parameters against which every solution can be measured on these critical factors, so that the best-fit solution can be identified.
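One common way to measure options against defined parameters is a weighted scoring matrix. The sketch below is a generic illustration, not Aecom's method: the criteria weights and 1 to 5 scores (5 = best) are hypothetical placeholders, and the options are deliberately left unnamed.

```python
import math

# Hypothetical criteria weights (must sum to 1).
WEIGHTS = {"capex": 0.30, "tco": 0.30, "pue": 0.25, "net_to_gross": 0.15}

# Hypothetical 1-5 scores per option, where 5 is best on that criterion.
SCORES = {
    "option_a": {"capex": 4, "tco": 2, "pue": 2, "net_to_gross": 3},
    "option_b": {"capex": 2, "tco": 4, "pue": 4, "net_to_gross": 3},
    "option_c": {"capex": 3, "tco": 3, "pue": 3, "net_to_gross": 4},
}


def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Sum of weight * score across all weighted criteria."""
    if not math.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1")
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[k] * scores[k] for k in weights)


ranked = sorted(SCORES, key=lambda n: weighted_score(SCORES[n], WEIGHTS), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(SCORES[name], WEIGHTS):.2f}")
```

Agreeing the weights with the client before scoring keeps the comparison transparent: changing the weight on capex relative to TCO can reorder the options.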

In reality, most data centres use air- or water-based cooling solutions, and this is where our cost comparison has focused. The future is already in place, however, with some clients opting for immersion cooling, in which servers are immersed in a liquid coolant for direct cooling of the electronic components. Immersing servers has been shown to improve rack density, cooling capacity and other design-critical factors.

Test projects that place data centres in the sea could bring significant changes to this industry in future. Aecom is carrying out advisory work with Atlantis, which proposes to build a data centre on the site of its tidal-energy centre off the coast of Scotland. The proposal shows how high-power-demand data centres could help fund the emerging tidal-power sector, thereby contributing to the future decarbonisation of the data centre sector.

Table 1: Benefits and drawbacks of three data centre cooling systems. All options are based on a 1,500W/m² IT load density requirement and Tier III certification; assessment based on experience of designing data centres.

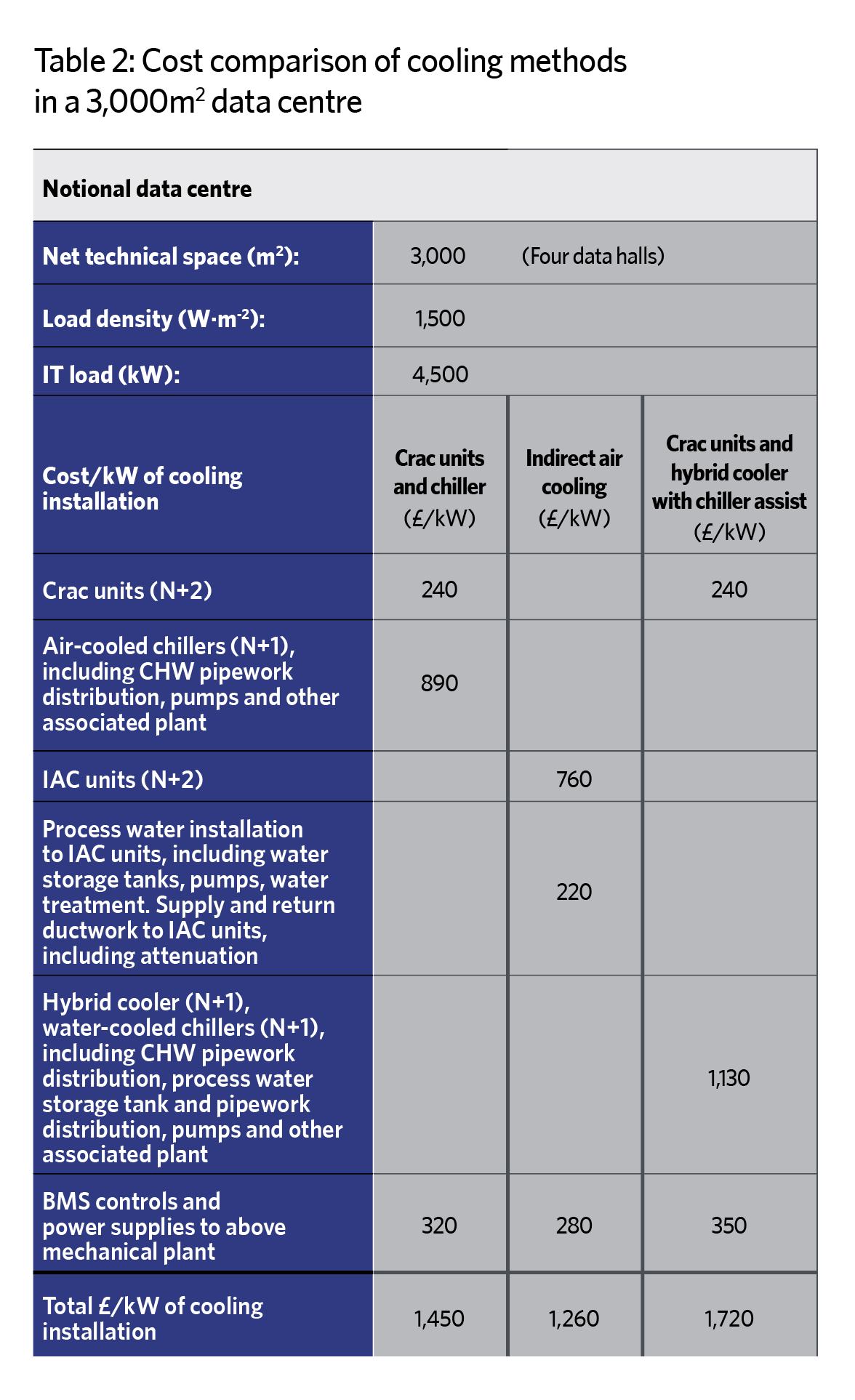

Table 2: Cost comparison of cooling methods in a 3,000m² data centre.

Notes on the above costs:

● Hot/cold aisle containment is excluded

● Main contractor prelims and overheads and profit (OHP) are excluded

● Building/structural/architectural works, dedicated fresh air systems, and electrical infrastructure are excluded
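For scale, the design basis in Tables 1 and 2 implies the IT load that each cooling option must reject. This quick calculation assumes, as a simplification, that the 1,500W/m² density applies across the full 3,000m² and that the IT equipment runs at full load all year; in practice the density may refer to white space only and utilisation is usually lower.

```python
IT_DENSITY_W_PER_M2 = 1500  # Table 1 design basis
AREA_M2 = 3000              # Table 2 data centre size
HOURS_PER_YEAR = 8760

# Assumption: density applies to the whole area at 100% utilisation.
it_load_kw = IT_DENSITY_W_PER_M2 * AREA_M2 / 1000
annual_it_mwh = it_load_kw * HOURS_PER_YEAR / 1000

print(f"IT load: {it_load_kw:,.0f} kW")
print(f"Annual IT energy at full load: {annual_it_mwh:,.0f} MWh")
```

On these assumptions the cooling plant must reject around 4.5MW of IT heat, equivalent to roughly 39,420MWh of IT energy a year.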

About the authors

This article has been written by Associates Nichola Gradwell and James Garcia, of Aecom’s cost-management team in London, with assistance from Mike Starbuck and Anirban Basak, of Aecom’s engineering team.